Kubernetes for Developers: A Refresher

1. Introduction

Kubernetes has become the de facto standard for container orchestration in modern cloud-native development. But what exactly is Kubernetes, and why is it so important for developers today?

Kubernetes is an open-source platform designed to automate deploying, scaling, and managing containerized applications. It abstracts away the complexity of infrastructure, allowing teams to focus on building and shipping features rather than worrying about how their applications run in production.

The problems Kubernetes solves are numerous: it handles service discovery, load balancing, automated rollouts and rollbacks, self-healing, and resource management. Before Kubernetes, developers often had to manually configure servers, manage dependencies, and handle scaling challenges. With Kubernetes, these tasks are declarative and automated, making development workflows more efficient and reliable.

For developers, understanding Kubernetes is crucial, not just for deploying applications, but for designing software that is resilient, scalable, and cloud-ready. Whether you’re working on microservices, APIs, or web apps, Kubernetes empowers you to build solutions that can run anywhere, from your laptop to massive cloud clusters.

In this refresher, we’ll revisit the core concepts, architecture, and best practices of Kubernetes, focusing on what developers need to know to be effective in today’s cloud-driven landscape.

2. Kubernetes Core Concepts

Kubernetes introduces a set of abstractions and principles that are essential for building, deploying, and managing modern applications.

Containers vs Pods: The Kubernetes Abstraction

While containers package your application and its dependencies, Kubernetes takes it a step further with Pods. A Pod is the smallest deployable unit in Kubernetes and can contain one or more containers that share storage, networking, and a specification for how to run the containers. Pods enable co-located processes to communicate efficiently and are the foundation for higher-level objects like Deployments and Services.
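
As a rough illustration of containers sharing a Pod, the sketch below runs a web server next to a small helper that reads the server's log file through a shared volume. The Pod name, container names, images, and paths are assumptions chosen for the example, not something the article prescribes.

```yaml
# Hypothetical example: names, images, and paths are illustrative.
apiVersion: v1
kind: Pod
metadata:
  name: web-with-sidecar
spec:
  volumes:
    - name: shared-logs          # scratch volume that lives as long as the Pod
      emptyDir: {}
  containers:
    - name: web
      image: nginx:1.27
      ports:
        - containerPort: 80
      volumeMounts:
        - name: shared-logs
          mountPath: /var/log/nginx   # nginx writes its log files here
    - name: log-tailer
      image: busybox:1.36
      # Follows the file the web container writes, via the shared volume.
      command: ["sh", "-c", "tail -F /logs/access.log"]
      volumeMounts:
        - name: shared-logs
          mountPath: /logs
```

Both containers also share the Pod's network namespace, so the helper could reach the web server on localhost:80.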

Declarative vs Imperative Configuration

Kubernetes supports two main approaches to managing resources:

- Imperative: You tell Kubernetes what to do, step by step, using commands like kubectl run or kubectl create.

- Declarative: You define the desired state in YAML manifests, and Kubernetes works to make the cluster match that state. This approach is preferred for production, as it enables version control, repeatability, and automation.

kubectl Usage

kubectl is the command-line tool for interacting with your Kubernetes cluster. It allows you to inspect resources, apply configurations, scale deployments, and troubleshoot issues. Mastering kubectl is key for any developer working with Kubernetes.

YAML Manifests

Kubernetes resources are typically defined in YAML files. These manifests describe the desired state of objects like Pods, Services, Deployments, and more. By applying these files, you instruct Kubernetes to create and manage resources according to your specifications.
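
A minimal sketch of such a manifest is shown here, using a Deployment as the example object. The name web, the app label, the replica count, and the nginx image are placeholders picked for illustration.

```yaml
# Hypothetical example: name, labels, and image are illustrative.
apiVersion: apps/v1          # API group and version of the object type
kind: Deployment             # the type of object to manage
metadata:
  name: web
  labels:
    app: web
spec:                        # the desired state
  replicas: 3
  selector:
    matchLabels:
      app: web               # must match the Pod template labels below
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
        - name: web
          image: nginx:1.27
          ports:
            - containerPort: 80
```

Saved as deployment.yaml, this could be applied with kubectl apply -f deployment.yaml. Running the same command again after editing the file updates the existing object rather than creating a second one.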

Understanding these core concepts is the foundation for working effectively with Kubernetes, whether you’re deploying a simple web app or architecting a complex microservices system.

3. Kubernetes Architecture: A Developer’s Perspective

Kubernetes architecture is designed to provide reliability, scalability, and flexibility for running containerized applications. Understanding its main components helps developers design and troubleshoot their workloads effectively.

The Control Plane

The control plane is the brain of the Kubernetes cluster. It manages the overall state, scheduling, and orchestration of workloads. Key components include:

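- API Server: The front door to the cluster, handling all REST requests and serving as the main interface for users and tools.

- etcd: The consistent key-value store that holds the cluster's state. The API server is the component that reads from and writes to it.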

- Scheduler: Assigns newly created pods to nodes based on resource availability and constraints.

- Controller Manager: Runs controllers that handle routine tasks, such as replicating pods, managing endpoints, and handling node failures.

Worker Nodes

Worker nodes are where your application containers actually run. Each node includes:

- kubelet: The agent that communicates with the control plane, ensuring containers are running as specified.

- Container Runtime: The software responsible for running containers (e.g., containerd or CRI-O; since Kubernetes 1.24, Docker Engine works only through the separate cri-dockerd adapter).

- kube-proxy: Manages networking rules, enabling communication between pods and services within and outside the cluster.

How They Interact

The control plane continuously monitors the cluster and makes decisions to maintain the desired state. When you deploy an application, the API server receives the request, the scheduler places pods on appropriate nodes, and the controller manager ensures everything stays in sync. Worker nodes report status and execute instructions, while kube-proxy and the container runtime handle networking and container lifecycle.

This separation of responsibilities allows Kubernetes to scale efficiently and recover from failures, making it a robust platform for modern application development.

4. Pods and Services

Kubernetes uses Pods and Services as fundamental building blocks for running and exposing applications.

What is a Pod?

A Pod is the smallest deployable unit in Kubernetes. It can contain one or more containers that share the same network namespace and storage volumes. Pods are ephemeral by design: if a Pod is lost, the controller that manages it (for example a Deployment) creates a replacement to maintain the desired state, while a Pod created on its own is not replaced. This abstraction allows you to group tightly coupled processes and manage them as a single unit.

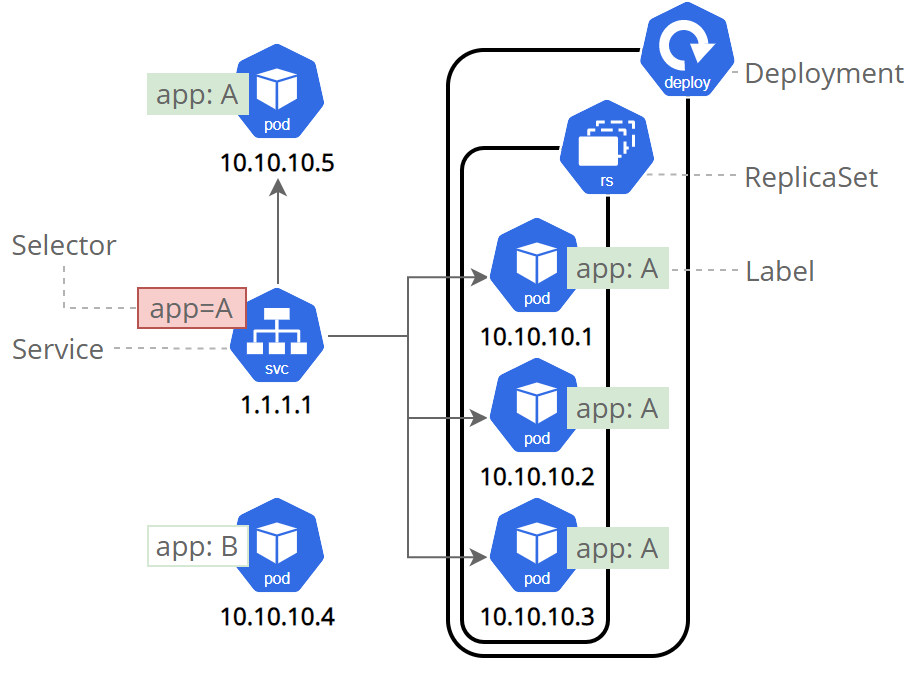

ReplicaSets and Deployments

ReplicaSets ensure that a specified number of Pod replicas are running at any given time. Deployments build on ReplicaSets, providing declarative updates to Pods and ReplicaSets. With Deployments, you can roll out changes, roll back to previous versions, and scale your application seamlessly.

What is a Service?

Services provide stable networking endpoints for Pods, abstracting away the dynamic nature of Pod IP addresses. There are several types of Services:

- ClusterIP: Exposes the Service on a cluster-internal IP, making it accessible only within the cluster.

- NodePort: Exposes the Service on a static port on each node, allowing external access via <NodeIP>:<NodePort>.

- LoadBalancer: Provisions an external load balancer (in supported environments) to route traffic to your Service.

Internal Networking

Kubernetes manages internal networking so that Pods can communicate with each other and with Services. DNS is automatically configured for Service discovery, making it easy to connect microservices.
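
To make the Service idea concrete, here is a minimal sketch of a ClusterIP Service. It assumes Pods labelled app: web that listen on port 80; the name and ports are illustrative.

```yaml
# Hypothetical example: assumes Pods labelled app: web listening on port 80.
apiVersion: v1
kind: Service
metadata:
  name: web
spec:
  type: ClusterIP            # the default; NodePort or LoadBalancer expose it externally
  selector:
    app: web                 # traffic goes to Pods carrying this label
  ports:
    - port: 80               # port the Service listens on
      targetPort: 80         # port on the selected Pods
```

Other Pods in the same namespace could then reach it at http://web, whichever Pod IPs currently sit behind it.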

Namespaces for Separation and Organization

Namespaces allow you to divide cluster resources between multiple users or teams, providing isolation and organization for development, testing, and production environments.
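
One way this might look in practice is a namespace paired with a quota that caps what it can consume. The dev name and the quota values are assumptions for illustration.

```yaml
# Hypothetical example: namespace name and quota values are illustrative.
apiVersion: v1
kind: Namespace
metadata:
  name: dev
---
apiVersion: v1
kind: ResourceQuota
metadata:
  name: dev-quota
  namespace: dev
spec:
  hard:
    requests.cpu: "4"        # total CPU all Pods in dev may request
    requests.memory: 8Gi     # total memory all Pods in dev may request
    pods: "20"               # maximum number of Pods in dev
```

Commands then target the namespace explicitly, for example kubectl get pods -n dev.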

Mastering Pods and Services is essential for deploying resilient, scalable applications in Kubernetes.

5. Exposing Applications

Exposing applications in Kubernetes is a critical step for making your services accessible to users, whether inside or outside your cluster.

Ingress vs LoadBalancer

There are two main ways to expose services externally: LoadBalancers and Ingress resources. A LoadBalancer service provisions an external load balancer (in supported cloud environments), providing a single IP address for your service. This is simple but can be costly and less flexible for complex routing.

Ingress is a more advanced option, acting as a smart HTTP(S) router. It allows you to define rules for routing traffic to different services based on hostnames or paths. Ingress resources require an Ingress Controller, such as NGINX or Traefik, which manages the routing and provides features like SSL termination and URL rewrites.
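
The sketch below shows what host and path rules could look like. It assumes an NGINX Ingress Controller registered under the class name nginx, plus two existing Services called api and web. The hostname and ports are placeholders.

```yaml
# Hypothetical example: hostname, class name, and backend Services are assumed.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: web
spec:
  ingressClassName: nginx    # selects which Ingress Controller handles this resource
  rules:
    - host: app.example.com
      http:
        paths:
          - path: /api       # requests under /api go to the api Service
            pathType: Prefix
            backend:
              service:
                name: api
                port:
                  number: 8080
          - path: /          # everything else goes to the web Service
            pathType: Prefix
            backend:
              service:
                name: web
                port:
                  number: 80
```

Without a running Ingress Controller, this resource is accepted by the API server but has no effect.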

Do I Need an External LoadBalancer?

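For simple use cases or cloud environments, a LoadBalancer service may be sufficient. For more complex scenarios, such as hosting multiple services under one domain or needing advanced routing, Ingress is the preferred solution. Note that the Ingress Controller itself is usually exposed through a single LoadBalancer or NodePort Service, so Ingress reduces the number of external load balancers rather than eliminating them.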

Role of Ingress Controllers

Ingress Controllers are responsible for implementing the rules defined in your Ingress resources. Popular options include NGINX, Traefik, and HAProxy. They run inside your cluster and manage traffic flow, security, and scalability for exposed services.

DNS Inside the Cluster

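Kubernetes automatically configures DNS for Services (and, in a more limited form, for Pods), making service discovery seamless. From within the same namespace you can refer to a Service by its name alone; from another namespace, use <service>.<namespace> or the fully qualified <service>.<namespace>.svc.cluster.local, where cluster.local is the default cluster domain. The cluster’s DNS system resolves these names to the correct internal IP addresses.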

Choosing the right exposure strategy is essential for balancing accessibility, security, and cost in your Kubernetes deployments.

6. Application Configuration

Configuring applications in Kubernetes is about separating code from configuration and managing sensitive data securely.

ConfigMaps: Externalize App Configuration

ConfigMaps allow you to store non-sensitive configuration data—such as environment variables, command-line arguments, or configuration files—outside your application image. This makes your deployments more flexible and portable, as you can change configuration without rebuilding containers.
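
A small sketch of a ConfigMap holding both simple key-value settings and a whole configuration file follows. All keys and values are invented for the example.

```yaml
# Hypothetical example: keys and values are illustrative.
apiVersion: v1
kind: ConfigMap
metadata:
  name: web-config
data:
  LOG_LEVEL: "info"                  # simple value, suitable for an environment variable
  app.properties: |                  # multi-line value, suitable for mounting as a file
    cache.ttl.seconds=300
    upstream.timeout.ms=2000
```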

Secrets: Managing Sensitive Data

Kubernetes Secrets are designed for storing sensitive information like passwords, API keys, and certificates. Secret values are base64-encoded, which is an encoding and not encryption: by default they are stored unencrypted in etcd, so the cluster should be configured to encrypt them at rest and access should be restricted with RBAC. You can expose Secrets to containers as environment variables or mount them as volumes, so your applications can read them without hardcoding sensitive values.
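
For illustration, a Secret might be declared as in this sketch. The name, key, and value are placeholders, and a real credential should not be committed to version control in this form.

```yaml
# Hypothetical example: name, key, and value are placeholders.
apiVersion: v1
kind: Secret
metadata:
  name: web-secrets
type: Opaque
stringData:                  # written as plain text; stored base64-encoded under "data"
  DB_PASSWORD: "change-me"
```

Reading the object back with kubectl get secret web-secrets -o yaml shows the value under data in base64 form, which anyone with read access can decode.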

Encryption at Rest

For production clusters, enable encryption at rest for Secrets to protect data even if someone gains access to the underlying storage.
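
On a self-managed control plane, encryption at rest is typically configured through a file passed to the API server with the --encryption-provider-config flag. The sketch below assumes the aescbc provider and leaves the key as a placeholder. Managed Kubernetes services usually expose this as a provider-specific setting instead.

```yaml
# Sketch for a self-managed control plane; the key name is illustrative
# and the key value is a placeholder that must be generated separately.
apiVersion: apiserver.config.k8s.io/v1
kind: EncryptionConfiguration
resources:
  - resources:
      - secrets                      # encrypt Secret objects
    providers:
      - aescbc:
          keys:
            - name: key1
              secret: <base64-encoded 32-byte key>
      - identity: {}                 # fallback so existing unencrypted data stays readable
```

The first provider in the list is used for writes, so Secrets created before the change stay unencrypted until they are rewritten.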

Mounting Secrets and ConfigMaps

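Both ConfigMaps and Secrets can be exposed to Pods as environment variables or mounted as files, allowing your applications to read configuration and secrets at runtime. Values injected as environment variables are fixed when the container starts, while mounted files are eventually refreshed when the ConfigMap or Secret changes. This approach supports twelve-factor app principles and makes your workloads more adaptable.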
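
The following sketch shows both consumption styles in one Pod. It assumes a ConfigMap named web-config with a LOG_LEVEL key and a Secret named web-secrets with a DB_PASSWORD key already exist in the namespace; those names are hypothetical.

```yaml
# Hypothetical example: assumes ConfigMap "web-config" and Secret "web-secrets" exist.
apiVersion: v1
kind: Pod
metadata:
  name: web
spec:
  containers:
    - name: web
      image: nginx:1.27
      env:
        - name: LOG_LEVEL              # single key from a ConfigMap
          valueFrom:
            configMapKeyRef:
              name: web-config
              key: LOG_LEVEL
        - name: DB_PASSWORD            # single key from a Secret
          valueFrom:
            secretKeyRef:
              name: web-secrets
              key: DB_PASSWORD
      volumeMounts:
        - name: secrets
          mountPath: /etc/secrets      # each Secret key appears as a file here
          readOnly: true
  volumes:
    - name: secrets
      secret:
        secretName: web-secrets
```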

Proper configuration management is key to building secure, maintainable, and scalable applications in Kubernetes.

7. Resource Management

Efficient resource management in Kubernetes ensures your applications run reliably and cost-effectively, even as demand fluctuates.

Requests and Limits: CPU and Memory

Kubernetes lets you specify resource requests (minimum guaranteed resources) and limits (maximum allowed resources) for each container. This helps the scheduler place Pods on nodes with enough capacity and prevents any single container from consuming excessive resources, protecting cluster stability.
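
As a sketch, requests and limits are set per container like this. The specific values are illustrative and would normally be derived from observed usage.

```yaml
# Hypothetical example: resource values are illustrative.
apiVersion: v1
kind: Pod
metadata:
  name: web
spec:
  containers:
    - name: web
      image: nginx:1.27
      resources:
        requests:
          cpu: 250m          # a quarter of a CPU core, used by the scheduler for placement
          memory: 128Mi
        limits:
          cpu: 500m          # CPU use above this is throttled
          memory: 256Mi      # exceeding this gets the container OOM-killed
```

Because the requests here are lower than the limits, this Pod would fall into the Burstable QoS class. Setting requests equal to limits for every container would make it Guaranteed.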

Quality of Service (QoS) Classes

Based on resource requests and limits, Kubernetes assigns Pods to QoS classes: Guaranteed, Burstable, or BestEffort. These classes influence how resources are allocated and which Pods are prioritized during resource contention or node pressure.

Horizontal Pod Autoscaler (HPA)

HPA automatically scales the number of Pod replicas based on observed CPU utilization or other metrics. This enables your application to handle variable workloads without manual intervention.
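
An autoscaler targeting CPU could be declared roughly as follows. The sketch assumes a Deployment named web whose containers set CPU requests, and a metrics source such as Metrics Server running in the cluster. The replica bounds and the 70% target are arbitrary.

```yaml
# Hypothetical example: assumes a Deployment "web" with CPU requests set.
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: web
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: web
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70     # percent of the Pods' CPU requests
```

Utilization is measured relative to each container's CPU request, so the autoscaler cannot compute it for containers without one.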

Vertical Pod Autoscaler (VPA)

VPA, an optional add-on that is not part of a default Kubernetes installation, adjusts the resource requests and limits for Pods automatically, helping optimize resource usage as application demands change over time.

Cluster Autoscaler

For cloud environments, the Cluster Autoscaler can automatically add or remove nodes in your cluster based on overall resource needs, ensuring you only pay for what you use while maintaining performance.

Mastering resource management is key to building resilient, scalable, and cost-effective applications in Kubernetes.

8. Deployment Strategies

Kubernetes supports several deployment strategies to help you release updates safely and efficiently.

Rolling Updates

Rolling updates are the default strategy for Deployments. They gradually replace old Pods with new ones, which avoids downtime as long as the new Pods report their readiness accurately (typically through readiness probes). This approach is ideal for most production workloads, as it allows you to monitor the rollout and roll back if issues arise.
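
The pace of a rolling update can be tuned on the Deployment itself. This sketch uses illustrative names and values, and a conservative setting that never drops below the desired replica count.

```yaml
# Hypothetical example: names, image, and values are illustrative.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web
spec:
  replicas: 4
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1            # at most one extra Pod above the desired count during the update
      maxUnavailable: 0      # never take an old Pod down before a new one is ready
  selector:
    matchLabels:
      app: web
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
        - name: web
          image: nginx:1.27
```

Progress can be followed with kubectl rollout status deployment/web, and kubectl rollout undo deployment/web returns to the previous revision.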

Recreate

The recreate strategy stops all existing Pods before starting new ones. While simple, it causes downtime and is rarely used for critical applications.

Blue/Green Deployments

This strategy involves running two environments (blue and green) simultaneously. You switch traffic from the old (blue) to the new (green) version once it’s ready. Blue/green deployments minimize risk and make rollbacks easy, but require more resources.
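
Kubernetes has no built-in blue/green object. One common way to approximate it is a Service whose selector includes a version label. The sketch assumes two Deployments already running, with Pods labelled version: blue and version: green. The label names are a convention chosen for this example.

```yaml
# Hypothetical example: assumes Pods labelled app: web plus version: blue or green.
apiVersion: v1
kind: Service
metadata:
  name: web
spec:
  selector:
    app: web
    version: blue            # change to "green" and re-apply to cut traffic over
  ports:
    - port: 80
      targetPort: 80
```

Rolling back is the same edit in reverse, provided the blue Deployment has been kept running.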

Canary Deployments

Canary deployments release updates to a small subset of users or Pods before rolling out to the entire cluster. This allows you to test new features in production and catch issues early. Tools like Argo Rollouts and Flagger can automate canary deployments in Kubernetes.

Choosing the right deployment strategy depends on your application’s requirements for availability, risk tolerance, and resource usage.

9. Environment Management

Managing multiple environments (dev, test, prod) in Kubernetes is streamlined with tools like Kustomize and Helm.

Kustomize: Overlay Configurations for Different Environments

Kustomize is built into kubectl and allows you to customize Kubernetes YAML manifests for different environments using overlays. You can reuse base configurations and apply environment-specific changes without duplicating files.
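
A production overlay might look like the sketch below. It assumes a directory layout with shared manifests in base/ and this file at overlays/prod/kustomization.yaml. The namespace, replica count, and image tag are illustrative.

```yaml
# Hypothetical example: overlays/prod/kustomization.yaml, with shared manifests in base/.
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
  - ../../base               # reuse the base manifests unchanged
namespace: prod              # place every resource in the prod namespace
replicas:
  - name: web                # override the replica count of the "web" Deployment
    count: 5
images:
  - name: nginx              # pin the image tag for this environment
    newTag: "1.27"
```

The rendered result can be previewed with kubectl kustomize overlays/prod and applied with kubectl apply -k overlays/prod.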

Helm: Kubernetes Package Manager

Helm is a powerful package manager for Kubernetes, enabling you to define, install, and upgrade complex applications using charts. Helm supports templating, versioning, and dependency management, making it ideal for managing large-scale deployments.

Both Kustomize and Helm help you maintain consistency, reduce errors, and simplify configuration management across environments.

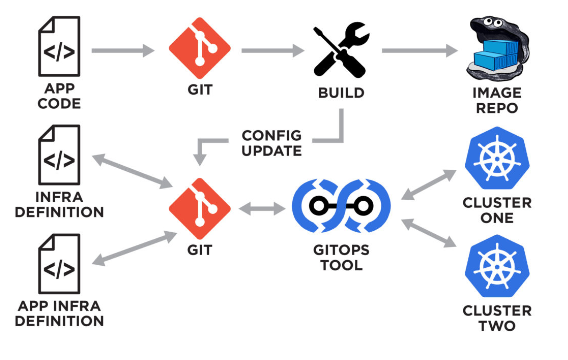

10. Infrastructure as Code

Infrastructure as Code (IaC) brings automation, repeatability, and version control to your Kubernetes infrastructure.

Using Terraform with Kubernetes

Terraform is a popular IaC tool that lets you define cloud resources and Kubernetes clusters using declarative configuration files. You can manage clusters on AWS (EKS), Google Cloud (GKE), and Azure (AKS), as well as provision node groups, networking, and storage.

Terraform can also deploy Kubernetes resources directly, allowing you to manage both infrastructure and application workloads from a single source of truth. This approach streamlines operations, reduces manual errors, and makes it easy to reproduce environments.

Adopting IaC with Terraform empowers teams to scale, audit, and maintain Kubernetes environments efficiently.

11. Monitoring and Logging

Monitoring and logging are essential for understanding application health, performance, and troubleshooting issues in Kubernetes.

Logs: kubectl logs

You can view container logs using kubectl logs, which is invaluable for debugging and tracking application behavior. For production, consider centralized logging solutions like Elasticsearch, Fluentd, and Kibana (EFK stack).

Metrics Server

The Metrics Server collects resource usage data (CPU, memory) from nodes and Pods, enabling features like autoscaling and resource monitoring. It’s lightweight and easy to install.

Prometheus and Grafana

Prometheus is a powerful monitoring system that scrapes metrics from Kubernetes and application endpoints. Grafana provides rich dashboards for visualizing metrics, helping you spot trends and anomalies quickly.

Robust monitoring and logging help you keep Kubernetes workloads reliable and make problems faster to diagnose.

12. Final Tips and Best Practices

To wrap up, here are some essential tips and best practices for working with Kubernetes as a developer:

- Use liveness and readiness probes: These health checks help Kubernetes detect when your application is ready to serve traffic or needs to be restarted. Proper probes improve reliability and reduce downtime.

- Keep secrets and configs separate: Store sensitive data in Secrets and configuration in ConfigMaps. Never hardcode credentials or configuration values in your application images.

- Monitor resource usage early: Set resource requests and limits for all containers, and use monitoring tools to track usage. This prevents resource contention and helps you scale efficiently.

- Use namespaces to organize dev/test environments: Namespaces provide isolation and organization, making it easier to manage multiple environments and avoid conflicts.
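
As a sketch of the first tip, probes are declared per container. The example probes the nginx default page for simplicity. A real application would more likely expose dedicated health endpoints, and the timings are illustrative.

```yaml
# Hypothetical example: paths and timings are illustrative.
apiVersion: v1
kind: Pod
metadata:
  name: web
spec:
  containers:
    - name: web
      image: nginx:1.27
      ports:
        - containerPort: 80
      readinessProbe:            # failing this removes the Pod from Service endpoints
        httpGet:
          path: /
          port: 80
        initialDelaySeconds: 5
        periodSeconds: 10
      livenessProbe:             # failing this repeatedly restarts the container
        httpGet:
          path: /
          port: 80
        initialDelaySeconds: 15
        periodSeconds: 20
        failureThreshold: 3
```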

By following these practices, you’ll build secure, maintainable, and scalable applications that take full advantage of Kubernetes’ capabilities.